AI in healthcare needs more than hype – it needs quality

26 February 2026

Digital and AI innovation is rapidly propelling the healthcare and life sciences industry into a new technological era, where the art of the possible is being continuously reimagined, creating more opportunities to unlock value and ultimately drive better health outcomes for patients. However, this innovation is also shifting the industry into uncharted territory from a regulatory, quality and safety perspective.

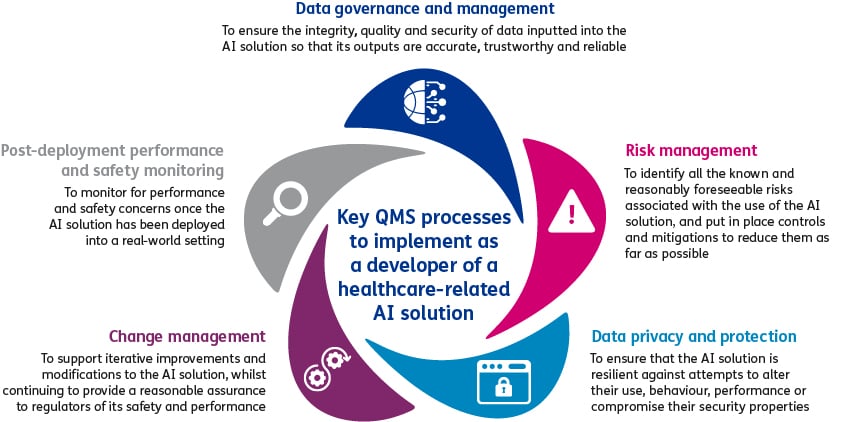

Organisations developing healthcare-related AI solutions need procedures for a secure software development lifecycle, covering processes such as design and development, and software verification and validation. Whether or not the AI solution is classified as a medical device, it is also imperative that organisations implement a robust Quality Management System (QMS), as this is critical for assuring stakeholders that the AI solution safely achieves its intended purpose throughout its entire lifecycle.

Example: ChatGPT Health

At the start of 2026, OpenAI launched ChatGPT Health in markets outside the UK, EU and Switzerland. ChatGPT Health connects data from users’ medical records and health and wellbeing apps to give users more tailored and personalised health information and context, such as helping them interpret test results and prepare for appointments with healthcare professionals. ChatGPT Health is currently not registered as a medical device in the markets where it is available, as the developer states that it is not intended to be used for diagnosis or treatment. Despite this, there is a strong argument that ChatGPT Health could be used as a clinical decision support tool and should therefore be regulated as a medical device. Regardless of its classification, false or misleading outputs from the AI solution clearly have the potential to cause significant harm to patients. In fact, it was recently reported that an individual developed bromide toxicity after consulting ChatGPT 3.5 for health information and being incorrectly informed that sodium bromide was a viable substitute for sodium chloride (salt).1 This example alone highlights why we strongly recommend that manufacturers of healthcare-related AI solutions implement a QMS to minimise performance issues that could ultimately affect patient safety.

International standards, such as ISO 13485:2016, provide a framework for a QMS specifically tailored to organisations developing medical devices, including software and AI-enabled devices. We recommend that any organisation developing healthcare-related AI solutions uses this standard when designing its QMS.

Even if an AI solution does not qualify as a medical device, our experience is that manufacturers should, at a minimum, ensure that their QMS includes processes for data management, risk management, change management and post-deployment performance and safety monitoring.


Data governance and management

Data quality and integrity are fundamental to developing healthcare-related AI solutions and to ensuring that their outputs are reliable, trustworthy and accurate. The tried and tested saying ‘junk in, junk out’ matters even more with AI. Even well-designed healthcare-related AI solutions can fail to achieve their purpose, because the data on which AI models are developed, trained, validated and/or tested can significantly affect their safety and performance once they are put into use. If the data is flawed, biased or incomplete, the AI model’s outputs will likely be erroneous and unreliable.

The quality of the data fed into a healthcare-related AI solution once it is in use after deployment is equally important for ensuring that it performs consistently throughout its lifecycle and maintains an appropriate level of accuracy and robustness. This is particularly pertinent for healthcare-related AI solutions that incorporate machine learning (ML), where the device can learn autonomously from the data it receives. Poor-quality data can lead to issues such as ‘algorithm drift’ (the degradation of the AI model’s performance over time as the real-world data it encounters deviates from the data it was originally trained on) or the perpetuation of inherent bias within the system.
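To illustrate how the input-data shift behind algorithm drift can be quantified, the Python sketch below computes a Population Stability Index (PSI) between a reference sample (for example, a feature’s values in the training data) and a sample of live data. PSI is one commonly used drift metric among several; the equal-width binning, the function name `population_stability_index` and the threshold mentioned afterwards are illustrative assumptions rather than a prescribed method.

```python
import math
from typing import Sequence


def population_stability_index(expected: Sequence[float],
                               actual: Sequence[float],
                               bins: int = 10) -> float:
    """Illustrative PSI between reference (e.g. training) and live values.

    Bins are equal-width over the reference range; live values outside that
    range are clamped into the first or last bin.
    """
    lo, hi = min(expected), max(expected)
    width = (hi - lo) / bins or 1.0  # avoid division by zero for constant data

    def proportions(values: Sequence[float]) -> list[float]:
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            counts[min(max(idx, 0), bins - 1)] += 1
        # Floor each proportion so empty bins do not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    ref, live = proportions(expected), proportions(actual)
    return sum((a - e) * math.log(a / e) for e, a in zip(ref, live))


# Usage: a live sample shifted upwards relative to the training sample.
training = [i / 10 for i in range(100)]   # values 0.0 to 9.9
live = [x + 5 for x in training]          # values 5.0 to 14.9
print(population_stability_index(training, training))  # identical data
print(population_stability_index(training, live))      # large shift
```

A commonly quoted rule of thumb treats a PSI above about 0.25 as a significant shift worth investigating, although any alert threshold should be justified for the specific solution and its intended use.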

We believe that, to address these challenges, organisations need a comprehensive data governance framework that systematically addresses data integrity, quality and security at every stage of the data lifecycle. The framework should include clearly defined policies and procedures, roles and responsibilities, and the enabling systems and technology.

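It is also essential that data collection and management processes align with established data integrity principles, such as ALCOA+ (data should be Attributable, Legible, Contemporaneous, Original and Accurate, as well as Complete, Consistent, Enduring and Available), and that robust procedures for data verification and validation are consistently followed.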

From our experience, additional practices and mechanisms developers can put in place to enhance the quality of their data include:

- Creating structured data entry and data quality rules, as well as parameters for harmonising data inputs

- Performing regular data audits

- Using agentic AI to perform automated and continuous data quality checks and flag errors and anomalies
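As a simple illustration of structured data quality rules, the Python sketch below checks each incoming record against a small set of declarative rules and returns readable error messages. The record layout, field names such as `sodium_mmol_l` and the plausible-value range are hypothetical; in practice the rules would come from an organisation’s own data specifications and clinical input.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Rule:
    field: str
    description: str
    check: Callable[[Any], bool]


# Hypothetical rules for an illustrative lab-result record.
RULES = [
    Rule("patient_id", "must be present", lambda v: bool(v)),
    Rule("sodium_mmol_l", "must be a number between 100 and 200",
         lambda v: isinstance(v, (int, float)) and 100 <= v <= 200),
    Rule("unit", "must be harmonised to 'mmol/L'", lambda v: v == "mmol/L"),
]


def validate(record: dict) -> list[str]:
    """Return one message per rule the record violates (empty if clean)."""
    errors = []
    for rule in RULES:
        value = record.get(rule.field)  # missing fields are checked as None
        if not rule.check(value):
            errors.append(f"{rule.field}: {rule.description} (got {value!r})")
    return errors


good = {"patient_id": "P001", "sodium_mmol_l": 140, "unit": "mmol/L"}
bad = {"patient_id": "", "sodium_mmol_l": "140", "unit": "mg/dL"}
print(validate(good))
print(validate(bad))
```

Rules like these could run both at the point of data entry and as part of automated, continuous checks, so that failing records are flagged for review rather than silently passed to the model.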

Risk management

Healthcare-related AI solutions can be high risk, as they have the potential to cause significant harm to patients’ health, safety and fundamental rights. Risks can arise at any stage of the AI solution’s lifecycle and can stem from its design, training or operation. We consider it essential for organisations to implement a risk management system that identifies all known and reasonably foreseeable risks and puts controls and mitigations in place to reduce them as far as possible, to an acceptable level at which the benefits outweigh the residual risk.
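ISO 14971 requires manufacturers to define their own criteria for risk acceptability in a risk management plan; it does not mandate a particular scoring scheme. Purely as an illustration, the Python sketch below uses a hypothetical five-point severity and probability scale with multiplicative scoring, a common but simplified approach, to show how a risk might be evaluated before and after controls are applied. The scales, thresholds and labels are all assumptions.

```python
from enum import IntEnum


class Severity(IntEnum):
    NEGLIGIBLE = 1
    MINOR = 2
    SERIOUS = 3
    CRITICAL = 4
    CATASTROPHIC = 5


class Probability(IntEnum):
    IMPROBABLE = 1
    REMOTE = 2
    OCCASIONAL = 3
    PROBABLE = 4
    FREQUENT = 5


# Hypothetical acceptability thresholds; real criteria belong in the
# organisation's risk management plan.
ACCEPTABLE_MAX = 4
REVIEW_MAX = 9


def evaluate(severity: Severity, probability: Probability) -> str:
    """Classify a risk as 'acceptable', 'review' or 'unacceptable'."""
    score = int(severity) * int(probability)
    if score <= ACCEPTABLE_MAX:
        return "acceptable"
    if score <= REVIEW_MAX:
        return "review"  # reduce further if practicable; justify the benefit-risk
    return "unacceptable"


# Hypothetical hazard: a hallucinated medication dose shown to a clinician.
print(evaluate(Severity.CRITICAL, Probability.OCCASIONAL))  # before controls
print(evaluate(Severity.CRITICAL, Probability.IMPROBABLE))  # after controls
```

In this illustration, a control such as mandatory clinician confirmation of any suggested dose is assumed to lower the probability of harm while leaving its severity unchanged.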

International standards and frameworks such as ISO 14971:2019 and the NIST AI Risk Management Framework (AI RMF) provide structures for risk management systems: ISO 14971:2019 focuses on risk management for medical devices, while the NIST AI RMF is designed to improve the trustworthiness of AI systems of all types.

Identifying and mitigating risks related to use error, abnormal use, misuse and abuse is also particularly important for medical devices, whether or not they use AI. Regulators are placing significant emphasis on organisations identifying these types of risk and applying human factors and usability engineering principles to mitigate them.

Examples of risks associated with healthcare-related AI solutions

One example is AI hallucination in the solution’s outputs. An AI hallucination occurs when the AI solution confidently presents erroneous information, which can lead not only to the spread of misinformation but also to incorrect decisions based on that information. Hallucinations from healthcare-related AI solutions can cause clinical harm, for example when false outputs lead to patients receiving incorrect treatment or to a wrong diagnosis. Another example is AI bias and the harm it can cause, including disparities in the device’s performance across demographic groups and the amplification of harmful stereotypes. A recent study examined whether a large language model (GPT-4) encodes racial and gender biases, and the impact of such biases on four potential clinical applications: medical education, diagnostic reasoning, clinical plan generation and subjective patient assessment. The study found that GPT-4 exaggerated known differences in disease prevalence between groups and perpetuated stereotypes about demographic groups when providing diagnostic and treatment recommendations.2
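One practical way to surface the performance disparities described above is to compare a model’s performance across demographic subgroups on a labelled evaluation set. The Python sketch below uses accuracy for simplicity; the group labels, toy data and pre-set tolerance are illustrative assumptions, and a real evaluation would normally also compare clinically relevant metrics such as sensitivity and specificity.

```python
from collections import defaultdict
from typing import Hashable, Iterable, Tuple


def subgroup_accuracy(records: Iterable[Tuple[Hashable, int, int]]) -> dict:
    """Accuracy per group from (group, prediction, label) tuples."""
    correct: dict = defaultdict(int)
    total: dict = defaultdict(int)
    for group, prediction, label in records:
        total[group] += 1
        correct[group] += int(prediction == label)
    return {group: correct[group] / total[group] for group in total}


def max_disparity(accuracy_by_group: dict) -> float:
    """Largest gap in accuracy between any two groups."""
    values = accuracy_by_group.values()
    return max(values) - min(values)


# Toy evaluation data: (hypothetical group label, model prediction, true label).
results = [
    ("group_a", 1, 1), ("group_a", 0, 0), ("group_a", 1, 1), ("group_a", 0, 0),
    ("group_b", 1, 1), ("group_b", 1, 0), ("group_b", 0, 0), ("group_b", 0, 1),
]
accuracy = subgroup_accuracy(results)
TOLERANCE = 0.05  # hypothetical maximum acceptable gap
print(accuracy, max_disparity(accuracy) > TOLERANCE)
```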

Data privacy and protection

Organisations must consider not only clinical safety risks but also cybersecurity and data privacy risks. Because healthcare-related AI solutions are likely to store, process and use data that is both personally identifiable and highly sensitive, the rights to privacy and to the protection of personal data must be guaranteed throughout the solution’s entire lifecycle.

Cybersecurity is vital to ensuring that healthcare-related AI solutions are resilient against attempts to exploit system vulnerabilities in order to alter their use, behaviour or performance, or to compromise their security properties. Cyberattacks pose serious risks, such as an attacker deliberately feeding the model maliciously modified data (data poisoning) or gaining access to sensitive training or validation data (data leakage).

Our view is that organisations should ensure that their risk management system comprehensively identifies and mitigates cybersecurity risks and that their QMS includes well-designed cybersecurity policies, processes and controls.

We recommend that organisations leverage international standards and frameworks such as ISO/IEC 27001 for Information Security Management Systems and the NIST Cybersecurity Framework. It is also important to have processes in place for activities such as threat modelling and cybersecurity testing, which for healthcare-related AI solutions should, at a minimum, include penetration testing and fuzz testing.

What is fuzz testing?

Fuzz testing is a software testing technique that feeds large amounts of random or malformed data ("fuzz") into a system to uncover unexpected bugs and vulnerabilities.
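To make the idea concrete, the Python sketch below is a very simple random fuzzer. It feeds random printable strings to a hypothetical input parser, `parse_observation`, which contains a deliberately planted bug, and records any input that triggers an exception other than the parser’s documented rejection of malformed input. Production fuzzing would typically rely on dedicated, coverage-guided tools such as libFuzzer or AFL++, which explore inputs far more efficiently than this sketch.

```python
import random
import string


def parse_observation(text: str) -> tuple[str, float]:
    """Hypothetical parser under test: expects 'name=value', e.g. 'hr=72'.

    It contains a deliberate bug: an empty name raises IndexError.
    """
    if "=" not in text:
        raise ValueError("expected 'name=value'")
    name, raw = text.split("=", 1)
    name = name.strip()
    label = name[0].upper() + name[1:]  # bug: fails when name is empty
    return label, float(raw)  # float() raises ValueError on bad numbers


def fuzz(target, iterations: int = 10_000, seed: int = 0) -> list:
    """Feed random strings to target and collect unexpected exceptions."""
    rng = random.Random(seed)  # seeded so any failure is reproducible
    failures = []
    for _ in range(iterations):
        data = "".join(rng.choice(string.printable)
                       for _ in range(rng.randint(0, 20)))
        try:
            target(data)
        except ValueError:
            pass  # graceful rejection of malformed input is expected
        except Exception as exc:
            failures.append((data, repr(exc)))
    return failures


failures = fuzz(parse_observation)
print(len(failures), failures[:1])
```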

We would also expect organisations to implement processes that ensure the relevant data protection laws are upheld. This includes applying the principles of data protection by design and by default, and implementing data protection controls and practices that ensure data minimisation and related techniques are fully considered from the design phase of the AI solution’s lifecycle onwards.
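As an illustration of data minimisation by design, the Python sketch below keeps only the fields on an allow-list that a model is assumed to need and replaces the direct patient identifier with a keyed pseudonym (an HMAC-SHA256 digest), so records can still be linked without exposing the identifier to the model pipeline. The field names and the allow-list are hypothetical, and pseudonymised data generally remains personal data under laws such as the UK GDPR and EU GDPR, so it still needs protection.

```python
import hashlib
import hmac

# Hypothetical allow-list: only the fields the model is assumed to need.
ALLOWED_FIELDS = {"age_band", "sodium_mmol_l"}


def minimise(record: dict, secret_key: bytes) -> dict:
    """Drop non-essential fields and pseudonymise the patient identifier."""
    reduced = {k: v for k, v in record.items() if k in ALLOWED_FIELDS}
    digest = hmac.new(secret_key, record["patient_id"].encode("utf-8"),
                      hashlib.sha256).hexdigest()
    reduced["pseudonym"] = digest[:16]  # truncated for readability (illustrative)
    return reduced


raw = {"patient_id": "P001", "name": "Jane Doe", "postcode": "AB1 2CD",
       "age_band": "60-69", "sodium_mmol_l": 140}
print(minimise(raw, secret_key=b"example-key-held-in-a-secrets-vault"))
```

Using a keyed hash rather than a plain hash means that someone without the key cannot simply re-compute pseudonyms from known identifiers.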

Change management

As mentioned earlier, healthcare-related AI solutions, particularly those that use ML, present unprecedented regulatory challenges because they can automatically and continuously re-train on new data or experience, with or without being explicitly programmed to do so. As a result, these dynamic AI solutions have inherent variability. Unlike other types of healthcare technology, which typically undergo few substantial updates, the performance and characteristics of an AI/ML solution cannot always be predicted once it has been approved and placed on the market.

To address these challenges, there is growing recognition that regulatory frameworks need to evolve and become more flexible, shifting away from one-time approval of the AI solution itself and placing stronger emphasis on regulating the QMS that underpins the solution’s entire lifecycle. This gives confidence that organisations are putting appropriate guardrails in place to sustain the safety and performance of their AI solution throughout its lifecycle.

One way in which regulators are reshaping regulation to support rapid innovation is by allowing organisations to make changes and modifications to their AI/ML medical devices without having to seek regulatory approval each time. The FDA, MHRA and Health Canada3 have recommended that organisations developing AI/ML devices proactively develop a Predetermined Change Control Plan (PCCP), to be reviewed and agreed by the regulator as part of the device’s initial regulatory submission. The purpose of the PCCP is to support iterative improvements and modifications to the device while continuing to give regulators reasonable assurance of its safety and performance, without the organisation having to seek approval for each change.

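What should be included in a PCCP?4

A PCCP should include:

- A description of modifications: the specific, planned modifications to the device

- A modification protocol: the methods that will be followed to develop, validate and implement those modifications

- An impact assessment: an assessment of the benefits and risks of the planned modifications, including how the risks will be mitigated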

From our experience, implementing robust change control processes within your QMS is essential. This is especially important when using a regulator-approved mechanism such as a PCCP to manage changes after deployment, to ensure that every modification complies with regulatory requirements and stays within the scope that was agreed.
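To illustrate how change control can enforce the boundaries of an authorised PCCP, the Python sketch below checks a proposed model update against pre-agreed limits before it is released. The change types, metric thresholds and class names are hypothetical; the actual boundaries and acceptance criteria would come from the modification protocol agreed with the regulator.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgreedBounds:
    """Hypothetical limits taken from an authorised PCCP."""
    allowed_change_types: frozenset
    min_sensitivity: float
    min_specificity: float


@dataclass(frozen=True)
class ProposedChange:
    change_type: str
    sensitivity: float  # measured per the PCCP's validation protocol
    specificity: float


def check_against_pccp(change: ProposedChange, bounds: AgreedBounds) -> list[str]:
    """Return reasons the change is out of scope; an empty list means in scope."""
    issues = []
    if change.change_type not in bounds.allowed_change_types:
        issues.append(f"change type '{change.change_type}' is not covered")
    if change.sensitivity < bounds.min_sensitivity:
        issues.append(f"sensitivity {change.sensitivity:.2f} < {bounds.min_sensitivity:.2f}")
    if change.specificity < bounds.min_specificity:
        issues.append(f"specificity {change.specificity:.2f} < {bounds.min_specificity:.2f}")
    return issues


bounds = AgreedBounds(frozenset({"retrain_on_new_data"}), 0.90, 0.85)
print(check_against_pccp(ProposedChange("retrain_on_new_data", 0.93, 0.88), bounds))
print(check_against_pccp(ProposedChange("new_patient_population", 0.93, 0.80), bounds))
```

A non-empty result would indicate that the change falls outside the plan and would typically need to go through the organisation’s standard change control and, where required, a new regulatory submission.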

Post-deployment performance and safety monitoring

The unique nature and challenges of healthcare-related AI solutions mean that once placed on the market and deployed in a real-world setting, organisations will need to be even more diligent with their post-deployment monitoring and vigilance activities to make sure that they are identifying and addressing performance and safety concerns. Organisations with healthcare-related AI solutions will particularly want to monitor for reproducibility concerns, algorithm drift, model degradation and unintended bias.

Organisations are required by regulators to establish and implement a post-deployment monitoring and vigilance system within their QMS. The system should include processes for:

- Developing and implementing post-deployment monitoring plans

- Continuously and systematically collecting data on AI solution performance and safety-related issues and incidents

- Analysing and reporting post-deployment monitoring data and insights

- Reporting safety-related issues and incidents to regulators (if applicable)
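As an illustration of continuous performance monitoring, the Python sketch below tracks agreement between a model’s outputs and subsequently confirmed outcomes over a rolling window of recent cases, and raises an alert when agreement drops below a threshold. The window size, the threshold and the assumption that confirmed outcomes (ground truth) become available after deployment are hypothetical; in practice the metrics and alert criteria would be defined in the post-deployment monitoring plan.

```python
from collections import deque


class RollingPerformanceMonitor:
    """Flag when rolling agreement with confirmed outcomes falls too low."""

    def __init__(self, window: int, threshold: float) -> None:
        self.results: deque = deque(maxlen=window)  # oldest cases drop off
        self.threshold = threshold

    def record(self, prediction, confirmed_outcome) -> bool:
        """Add one case and return True if an alert should be raised."""
        self.results.append(prediction == confirmed_outcome)
        if len(self.results) < self.results.maxlen:
            return False  # wait until the window is full
        agreement = sum(self.results) / len(self.results)
        return agreement < self.threshold


monitor = RollingPerformanceMonitor(window=4, threshold=0.75)
cases = [(1, 1), (1, 1), (1, 1), (1, 1), (1, 0), (0, 1)]
for prediction, outcome in cases:
    if monitor.record(prediction, outcome):
        print("Alert: rolling agreement below threshold; escalate for review")
```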

Conclusion

In conclusion, establishing a comprehensive and well-functioning QMS is vital for the development and deployment of healthcare-related AI solutions, and for ensuring sustained performance, patient safety and stakeholder trust. Organisations should also implement strong process governance and oversight to maintain the effectiveness of these processes and controls. Without a robust QMS in place, organisations risk significantly limiting the potential of healthcare-related AI solutions to advance patient care.

How Baringa can help you unlock the foundations that drive the safety and performance of your healthcare-related AI solution

At Baringa, we leverage our deep expertise across a breadth of areas including QMS, Data and AI, digital health and medical devices, risk management, and cybersecurity to help our clients design and implement industry-leading QMSs that are fit-for-purpose and underpin the safe development and deployment of healthcare-related AI solutions.

We can partner with you to:

- Evaluate whether your AI product meets the criteria for a medical device across global markets and guide you in formulating regulatory and quality strategies.

- Review your current QMS or business processes to spot deficiencies and recommend necessary improvements.

- Design and implement a tailored QMS that enables you to demonstrate the quality of your AI solution to relevant stakeholders.

- Establish a comprehensive data strategy and governance framework.

- Foster organisational culture change to facilitate QMS adoption and embrace new ways of working.

Baringa empowers clients to unlock the full potential of their AI solutions, safeguarding patient outcomes while meeting industry standards. To find out more, please get in touch with our Pharmaceutical and Life Sciences team members: Chris Maxted, Hakim Yadi, or Demi Dabor-Alloh.

References

- A Case of Bromism Influenced by Use of Artificial Intelligence, Annals of Internal Medicine: Clinical Cases (https://www.acpjournals.org/doi/10.7326/aimcc.2024.1260)

- Assessing the potential of GPT-4 to perpetuate racial and gender biases in health care: a model evaluation study, The Lancet Digital Health (https://www.thelancet.com/journals/landig/article/PIIS2589-7500(23)00225-X/fulltext)

- Predetermined change control plans for machine learning-enabled medical devices: guiding principles, MHRA (https://www.gov.uk/government/publications/predetermined-change-control-plans-for-machine-learning-enabled-medical-devices-guiding-principles/predetermined-change-control-plans-for-machine-learning-enabled-medical-devices-guiding-principles)

- Marketing Submission Recommendations for a Predetermined Change Control Plan for Artificial Intelligence-Enabled Device Software Functions, FDA (https://www.fda.gov/media/166704/download)
